Artificial Intelligence (AI) for Libraries & Image Repositories

An open-source AI image metadata enrichment and distribution service for academic, state and national libraries that enables content discovery, improves image accessibility and aids content re-use.

Biblio AI uses state-of-the-art computer vision technologies from Amazon AWS and Microsoft Azure to bring new depth and possibilities to your library's digital image collection.

Identify and tag thousands of objects within an image. Identify image types and colour schemes in pictures. Recognise brands, celebrities and landmarks. Automatically catalogue the gender and age of people within an image.

Give vision-impaired users greater access to your image collection. Existing image repository records may have limited descriptive information, which disadvantages vision-impaired users when they try to understand, search and discover visual content in your collection.

Transcribing documents is currently an expensive endeavour for libraries and is limited to only the most important works. Biblio AI enables you to bulk transcribe your entire scanned historical document library using new AI solutions that are highly accurate even on handwritten historical documents such as maps, journals and notes.

Not limited to classic library search platforms, Biblio AI can distribute your content directly to creator-centric services to aid content re-use by non-traditional library users.

Totally open source, with no vendor lock-in, and not tied to any specific services or providers. Use any image repository provider, any library discovery layer and whichever cloud, hybrid or on-prem IT architecture suits you best.

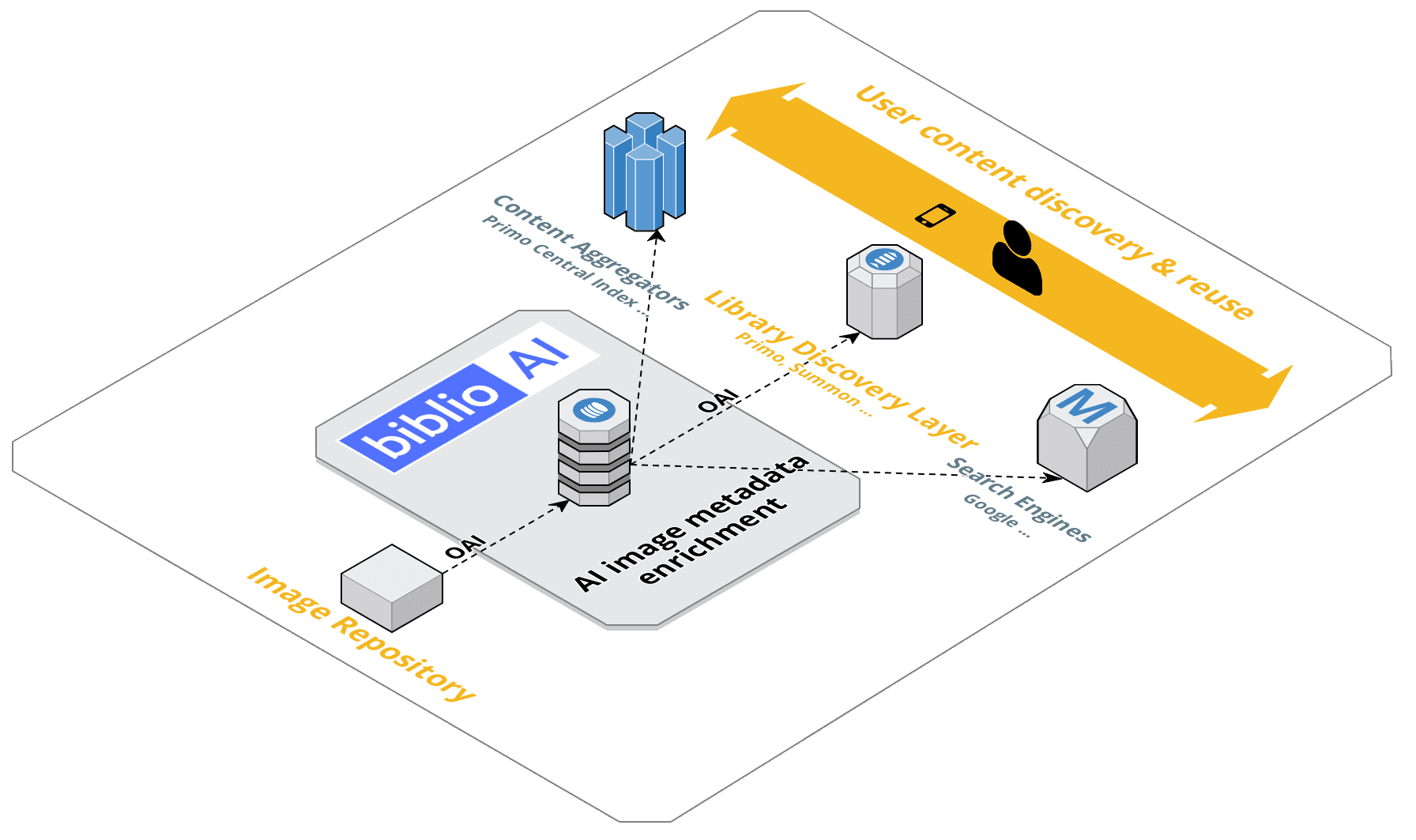

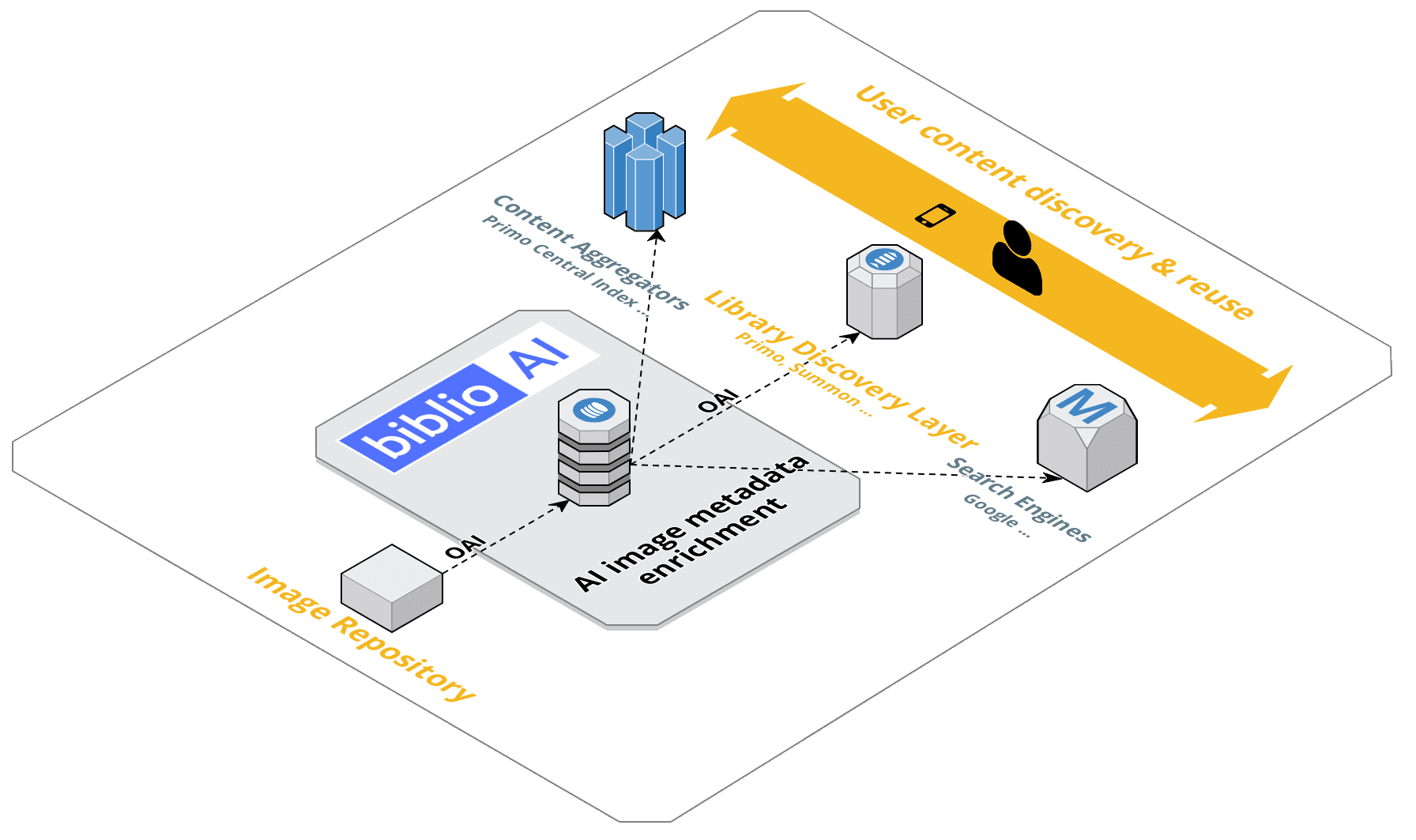

Biblio AI works with your existing library discovery layer and with library content aggregators (for example, Primo Central Index), and provides APIs ready for search engine distribution.

Biblio AI works with any image repository or library discovery layer that implements OAI-PMH (Open Archives Initiative Protocol for Metadata Harvesting), the repository interoperability standard.
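To give a feel for what harvesting over OAI-PMH involves, here is a minimal Python sketch. It is not Biblio AI's own code: the endpoint `https://repo.example.org/oai`, the function names and the sample response are all hypothetical, chosen only for illustration. It assumes a repository that serves the standard `ListRecords` verb with the `oai_dc` metadata format.

```python
from urllib.parse import urlencode
import xml.etree.ElementTree as ET

# XML namespace defined by the OAI-PMH 2.0 specification.
OAI_NS = {"oai": "http://www.openarchives.org/OAI/2.0/"}


def build_list_records_url(base_url, metadata_prefix="oai_dc", resumption_token=None):
    """Build a ListRecords request URL (hypothetical helper, not a Biblio AI API)."""
    if resumption_token:
        # resumptionToken is an exclusive argument: only the verb may accompany it.
        params = {"verb": "ListRecords", "resumptionToken": resumption_token}
    else:
        params = {"verb": "ListRecords", "metadataPrefix": metadata_prefix}
    return f"{base_url}?{urlencode(params)}"


def parse_list_records(xml_text):
    """Return (record identifiers, resumption token or None) from a response body."""
    root = ET.fromstring(xml_text)
    identifiers = [
        el.text
        for el in root.findall(".//oai:record/oai:header/oai:identifier", OAI_NS)
    ]
    token_el = root.find(".//oai:resumptionToken", OAI_NS)
    # An empty or missing token means the harvest is complete.
    token = token_el.text if token_el is not None and token_el.text else None
    return identifiers, token


# Made-up response, trimmed to the elements the parser reads.
SAMPLE_RESPONSE = """<OAI-PMH xmlns="http://www.openarchives.org/OAI/2.0/">
  <ListRecords>
    <record><header><identifier>oai:example.org:img-1</identifier></header></record>
    <record><header><identifier>oai:example.org:img-2</identifier></header></record>
    <resumptionToken>page-2</resumptionToken>
  </ListRecords>
</OAI-PMH>"""

if __name__ == "__main__":
    print(build_list_records_url("https://repo.example.org/oai"))
    print(parse_list_records(SAMPLE_RESPONSE))
```

A real harvester would fetch each URL over HTTP and keep requesting with the returned token until none comes back; the sketch leaves the network call out so it can run offline.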

Got a legacy image repository with no upgrade path? Biblio AI is a complementary service that automatically AI-enables your aging image repository. Biblio AI is a drop-in solution that requires no change to your existing image repository.

Developer

Support and Funding

Development Partner

Want to know more about Biblio AI? Feel free to contact us through any of the social options listed above or the contact form below.